Insights from ai-PULSE 2025: Building for Real-world Deployment

At ai-PULSE 2025, leaders across industries showed what happens when AI is deployed into real-world environments. This article brings together the key insights from these sessions.

AI progress is no longer a story about text alone.

At ai-PULSE 2025, leaders across text translation, image generation, voice, and video examined how each modality is hitting distinct technical limits, and calling for new design choices in response. From the plateau of autoregressive transformers to lightweight open-source diffusion models, from production-ready European voice systems to edge-native video architectures, the focus is shifting from brute-force scale to smarter engineering.

This article brings together the key insights from these sessions, highlighting how multimodal AI is evolving — and what it takes to turn cutting-edge research into dependable, real-world systems.

In this session, Marco Trombetti, Co-Founder and CEO of Translated, drew on more than two decades of production-scale translation to examine the limits of today’s autoregressive transformers. Translation, he argued, is an unusually strict benchmark: unlike most LLM use cases, errors are immediately exposed: “If you hallucinate a little bit in an LLM, nobody will discover it; if you hallucinate in translation, you make everyone laugh.”

Using years of human-in-the-loop correction data, Trombetti showed that progress in machine translation quality has slowed for the first time since 2010. Instead of relying on abstract benchmarks, Translated measures improvement by the time professional translators need to correct AI output. After years of steady decline, this curve has flattened, pushing the projected “human singularity” — when a translation requires no correction — into the early 2030s. For Trombetti, this slowdown points to architectural limits rather than missing data or compute.

He framed language as “the most profound manifestation of human intelligence,” and explained why translation reveals what transformers still struggle with: ambiguity introduced by tokenization, the inability to reason and decode simultaneously, and learning constrained to past human data. To address these limits, Translated is developing DVPS, an open foundation model designed to rethink tokenization, enable parallel reasoning in latent space, and support learning through experience during inference. (▶️ Watch session in full)

David Bertoin, Research Scientist, Photoroom

Jon Almazán, Research Scientist, Photoroom

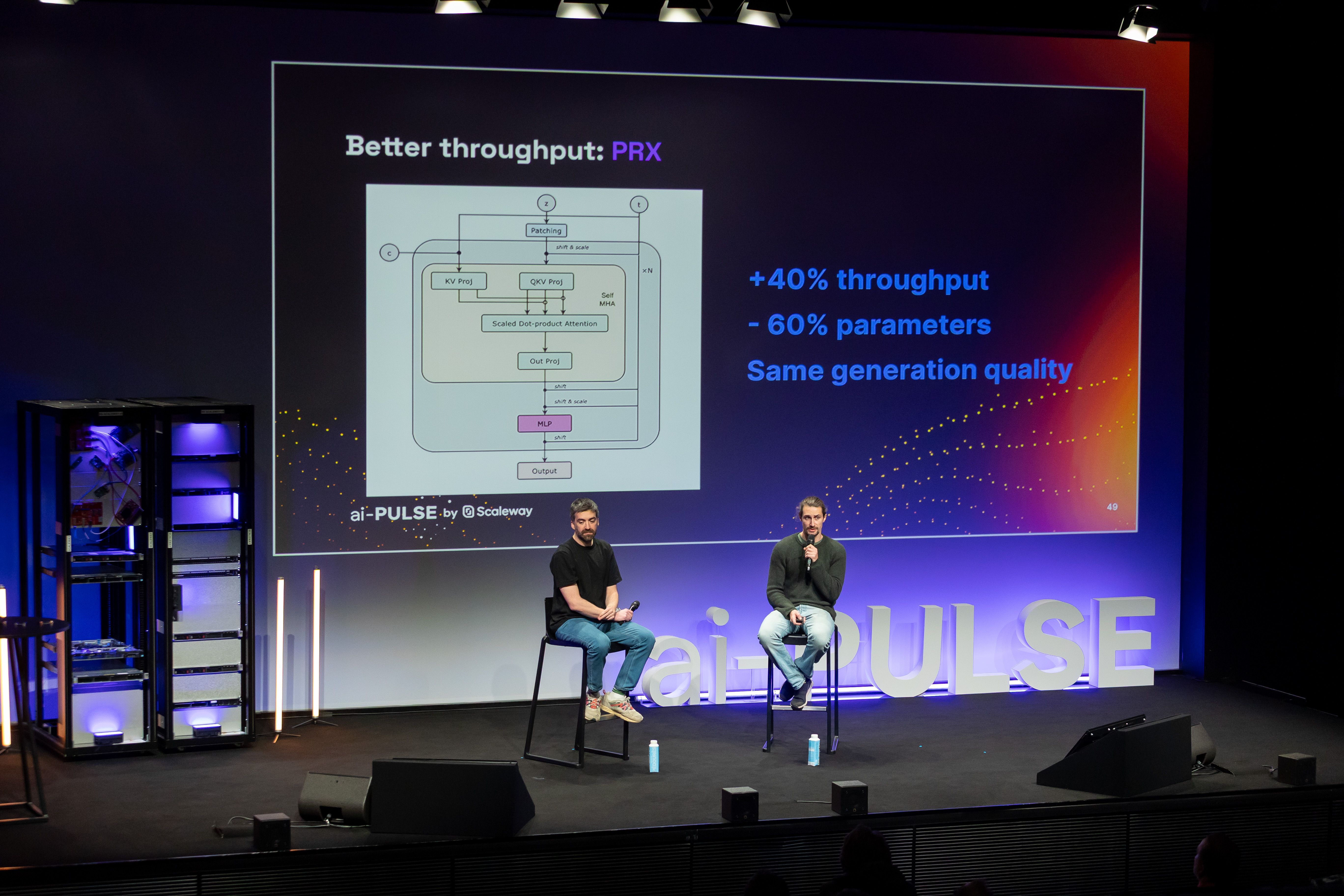

Photoroom’s session offered a look behind the scenes of training a text-to-image foundation model from scratch — and why doing so openly can be a technical advantage. The speakers demystified the apparent “magic” of diffusion models with hard numbers: after a few hundred GPU hours, “the model doesn’t even know what’s a shape,” while realistic images only emerge after tens of thousands of GPU hours. This cost and friction pushed Photoroom to rethink both scale and process.

Research Scientists David Bertoin and Jon Amazan introduced PRX, a lightweight 1.2-billion-parameter text-to-image model designed to run on consumer GPUs and released under a permissive Apache 2.0 license. Photoroom chose to open not just the weights, but the entire development trail: “We are sharing all the experiments, all the ablation studies, everything we’ve done — what worked, and what didn’t.”

A key acceleration came from rethinking data rather than brute force. By recaptioning images with far more detailed prompts using vision-language models, PRX learns disentangled concepts faster and avoids the usual pre-training and fine-tuning trade-offs. Combined with architectural choices that made the model significantly lighter and faster, this approach enabled Photoroom to reach high-quality image generation orders of magnitude sooner than with its previous models. This turned openness and iteration speed into core advantages. (▶️ Watch session in full)

Enrico Bertino, Chief AI Officer, indigo.ai

Alexandre Défossez, Chief Exploration Officer, Kyutai

Constance Morales, PMM AI Products, Scaleway

Europe’s AI ecosystem is increasingly defined by its ability to translate open research into production-ready systems. This session, moderated by Constance Morales, PMM AI Products at Scaleway, brought together Kyutai and indigo.ai to examine what that shift looks like in practice, from foundational models to deployed conversational products.

Both speakers emphasized that moving from lab to production is less about scaling model size than about engineering discipline: adapting architectures for latency and cost constraints, fine-tuning multilingual models for real dialogue, and ensuring consistent behavior in real-world settings. At indigo.ai, this results in domain-specific conversational systems where data governance, privacy, and robustness are first-order design constraints.

For Kyutai, openness is central to this transition. As the company’s Chief Exploration Officer Alexandre Défossez put it, “What we’ve created with Moshi is not just a voice model, but a platform on which developers can innovate.” Publishing architectures, weights, and research artifacts enables others to adapt and extend the work, accelerating collective progress.

Both companies converged on a pragmatic view of sovereignty: maintaining control over data, understanding how models behave, and retaining the ability to adapt them locally. Open models may be free to use, but their actual impact depends on being turned into reliable, efficient products where research rigor meets production reality. (▶️ Watch session in full)

Olivier Reynaud, CEO, AIVE

Dam Mulhem, Co-founder & Head of Product, XXII

Paul Mochkovitch, Co-founder, Molia

This conversation focused on what it actually takes to deploy video AI systems under real-world constraints. Rather than chasing ever-larger models, the discussion centered on latency budgets, cost ceilings, and reliability requirements that emerge once systems leave the lab.

The three speakers described a clear architectural shift: inference must increasingly happen at the edge, close to cameras and sensors, with only the most relevant signals propagated upstream into generative pipelines. This forces difficult trade-offs — between accuracy and speed, generality and specialization — but enables scalable, production-grade deployments.

At AIVE, this meant redesigning video analysis around temporal efficiency and selective computation. As CEO Olivier Reynaud put it, “Imagine analyzing a two-hour video taking an entire day. By optimizing our models, primarily open source, fine-tuned to focus only on key data, we’ve achieved real-time processing.” The quote encapsulated the session’s core message that usefulness is defined by turnaround time.

XXII highlighted similar constraints in security and urban vision, where edge deployment is not an optimization choice but a prerequisite: systems must operate deterministically, tolerate degraded conditions, and deliver consistent outputs without reliance on continuous cloud access. This sharply limits acceptable model complexity and favors tightly controlled inference stacks. Molia extended the discussion to generative workflows, arguing that video AI only delivers value when inference, generation, and post-processing are tightly orchestrated as a single system. (▶️ Watch session in full)

On December 4, 2025 we hosted the third edition of ai-PULSE, Europe's premier AI conference.

With 1,600+ people gathered at STATION F in Paris and thousands more joining online, this was our biggest and most ambitious edition yet — a place where leading researchers, founders, and builders from Europe and beyond came to explore where AI is heading next.

If you couldn’t follow everything live, our white paper captures the key takeaways from across 30+ sessions into one structured recap.

At ai-PULSE 2025, leaders across industries showed what happens when AI is deployed into real-world environments. This article brings together the key insights from these sessions.

A look inside the breakthrough ideas and announcements that defined ai-PULSE 2025 — from world models to robotics, voice AI, and Europe’s growing momentum.