How Scaleway brought the first RISC-V servers to the cloud

In 2013, Scaleway was one of the first cloud providers to offer ARM bare metal instances — physical servers directly accessible without virtualization. Eleven years later, we did it again by launching the world’s first dedicated RISC-V server offering.

But how do you turn an instruction set architecture (ISA) into a fully operational cloud server? Here is a behind-the-scenes look at this industry first, from the instruction set itself to the hardware design choices that made it possible.

The rise of the RISC-V architecture

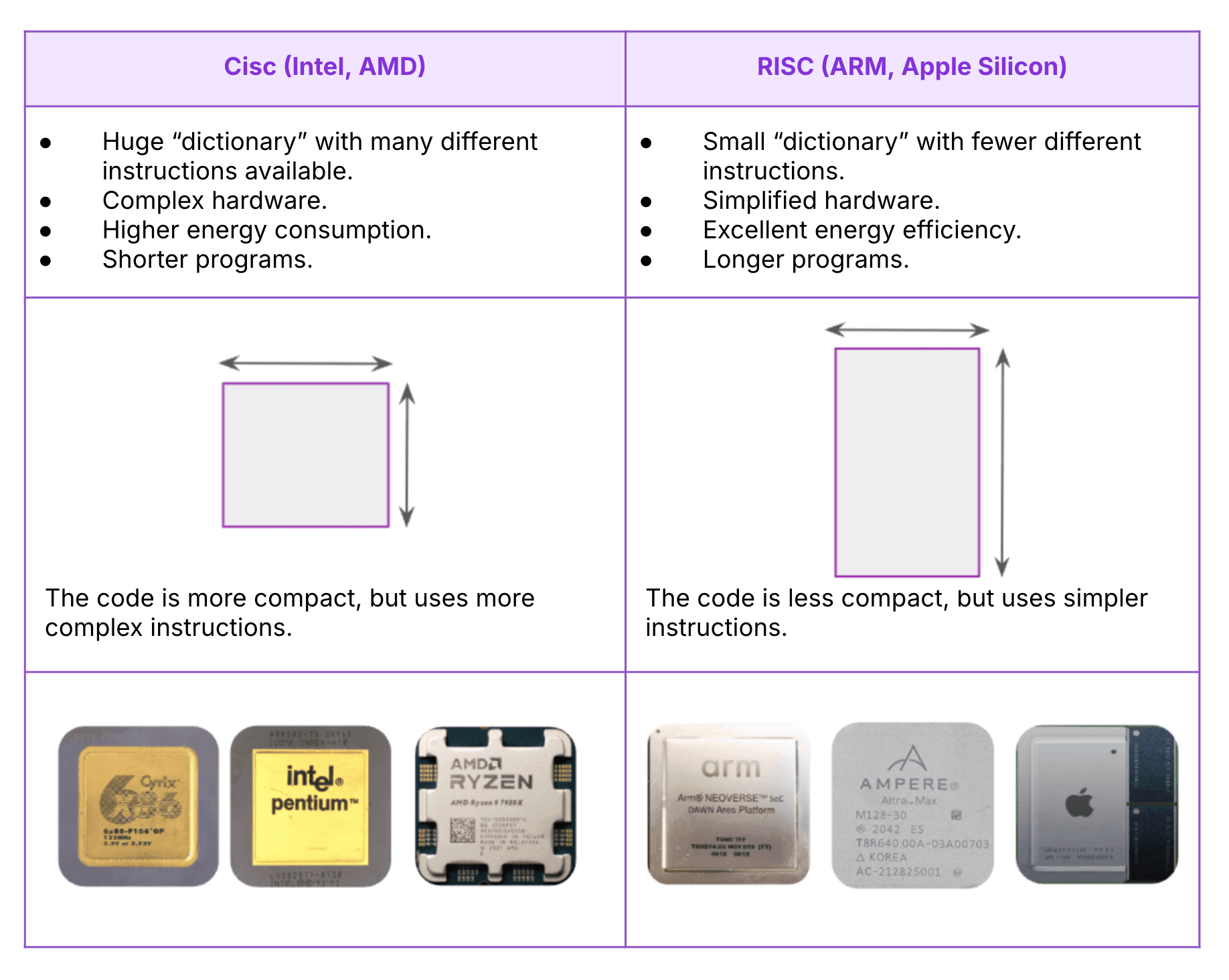

For decades, the processor world has been dominated by CISC (Complex Instruction Set Computing) architectures, with giants such as Intel and AMD shaping modern computing.

These architectures, designed to carry out complex operations in as few instructions as possible, drove the development of laptops and servers. But with the rise of mobile phones in the 2000s, they proved less well suited: they consumed more power and offered less flexibility for new use cases.

In response to these limitations, RISC (Reduced Instruction Set Computing) architectures, which had first emerged in the 1980s, made a strong comeback. Thanks to simpler instructions that processors can execute more quickly, they let chip designers optimize both energy consumption and performance.

ARM in particular helped bring RISC back into the mainstream for consumer applications, with lighter, more energy-efficient processors suited to smartphones and connected devices.

Today, RISC-V goes one step further by offering an open-source instruction set — the machine language understood by the processor. Unlike proprietary architectures such as x86 or ARM, this ISA (Instruction Set Architecture) is free and open to everyone, requires no licensing, and can be extended or modified freely.

The RISC-V revolution and its impact

Launched in 2010 at the University of California, Berkeley, RISC-V builds on the legacy of earlier RISC architectures.

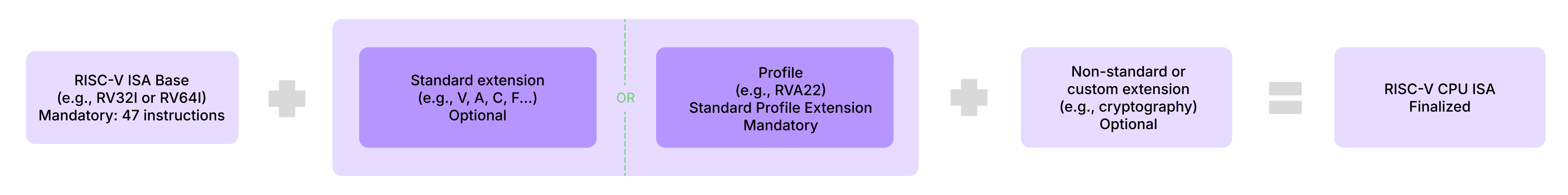

Its core principle is the modularity of the instruction set. Whereas previous generations (RISC I through IV) focused primarily on performance, RISC-V emphasizes flexibility. Designers can add extensions to tailor their processors to specific uses: vector computing, AI processing, encryption, and more.

While this approach makes it possible to design more specialized hardware, it also comes with drawbacks: every combination of extensions creates a new variant of the machine language. In other words, a program compiled for one configuration may not run on another. This fragmentation is now a concern for part of the community: Linus Torvalds, for example, has criticized the growing number of RISC-V variants.

To address this issue, RISC-V International (formerly the RISC-V Foundation) introduced standardized profiles such as RVA23, which define a common baseline of mandatory instructions and extensions that a processor must implement to comply with the profile. These profiles give developers a stable foundation and ensure that the same software can run, without recompilation, on any processor that implements the profile.
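To make the compatibility rule behind profiles concrete, here is a minimal Python sketch: software targeting a profile can run on any processor whose extension set is a superset of the profile's mandatory set. The extension list below is a deliberately partial subset of RVA23U64 chosen for illustration, not the full profile.

```python
# Illustrative sketch: a PARTIAL subset of the extensions that the RVA23U64
# profile makes mandatory. The full, authoritative list is in the RVA23
# profile specification published by RISC-V International.
RVA23U64_SUBSET = frozenset(
    {"I", "M", "A", "F", "D", "C", "Zicsr", "V", "Zba", "Zbb", "Zbs", "Zicond"}
)


def missing_for_profile(cpu_extensions, profile):
    """Return the profile extensions that a CPU does not implement.

    Software built for a profile may use any extension the profile mandates,
    so it can only run unmodified on a CPU whose extension set is a superset
    of the profile, i.e. when this function returns an empty set.
    """
    return set(profile) - set(cpu_extensions)


# A core that implements only RV64GC (G = IMAFD plus Zicsr and Zifencei).
rv64gc_core = {"I", "M", "A", "F", "D", "C", "Zicsr", "Zifencei"}
print(sorted(missing_for_profile(rv64gc_core, RVA23U64_SUBSET)))
# → ['V', 'Zba', 'Zbb', 'Zbs', 'Zicond']
```

Extra extensions on the CPU side are harmless; only the missing ones break compatibility, which is exactly the fragmentation problem profiles are meant to contain.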

When we say RISC-V is open source, it is important to distinguish between the ISA itself (the standard, which is open) and its physical implementation, which is not necessarily open. In most cases, the internal processor design and its source code, written in a hardware description language (HDL), remain proprietary. Manufacturers therefore keep their trade secrets and business models while still following the public “grammar” imposed by the profile.

Without the shared baseline that profiles provide, software would either have to emulate missing instructions, at the cost of extremely poor performance, or simply be unable to perform certain tasks that require specific hardware support, such as virtualization.

RISC-V is therefore at a turning point: balancing the promise of an open, customizable ISA with the challenge of maintaining a coherent and compatible ecosystem.

The above diagram gives a sense of the “recipe” used to compose a RISC-V ISA: each letter corresponds to a standard or specialized extension that makes a given ISA unique. Take the SiFive U74, for example, used in the HiFive Unmatched development board.

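Its ISA is RV64GC, also written RV64IMAFDC: a 64-bit architecture (RV64) with the base integer instruction set (I) and extensions for integer multiplication and division (M), atomic instructions (A), single- and double-precision floating point (F and D), and compressed instructions (C). The letter G is shorthand for IMAFD plus the Zicsr and Zifencei extensions. Who would have thought choosing a CPU could be this complex?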
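The naming scheme can be decoded mechanically. The Python sketch below is our own simplified parser, not a reference implementation: it expands `G`, keeps underscore-separated multi-letter extensions, and deliberately ignores version suffixes such as `i2p1`.

```python
import re


def parse_isa(isa):
    """Split a RISC-V ISA string such as 'RV64GC' into (xlen, extensions).

    'G' is expanded to IMAFD plus Zicsr and Zifencei. Multi-letter
    extensions written after underscores ('_zba', '_zicond', ...) are kept
    as-is. Version suffixes such as 'i2p1' are deliberately not handled.
    """
    m = re.fullmatch(r"rv(32|64|128)([a-z]*)((?:_[a-z0-9]+)*)", isa.lower())
    if m is None:
        raise ValueError(f"not a RISC-V ISA string: {isa!r}")
    xlen, letters, multi = int(m.group(1)), m.group(2), m.group(3)
    extensions = set()
    for letter in letters:
        if letter == "g":
            extensions |= {"i", "m", "a", "f", "d", "zicsr", "zifencei"}
        else:
            extensions.add(letter)
    extensions |= {name for name in multi.split("_") if name}
    return xlen, extensions


xlen, exts = parse_isa("RV64GC")
print(xlen, sorted(exts))
# → 64 ['a', 'c', 'd', 'f', 'i', 'm', 'zicsr', 'zifencei']
```

Because `G` is expanded, `parse_isa("RV64GC")` and `parse_isa("rv64imafdc_zicsr_zifencei")` return the same result.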

What challenges did Scaleway overcome to launch the first RISC-V Bare Metal offering?

Over the past few years, the RISC-V ecosystem has continued to grow and is being adopted more and more by industrial players. RISC-V is now supported by major projects such as Android and the Linux kernel, and it is even expected to be used in future NASA missions. The RISC-V Summit, the ecosystem’s flagship event, now brings together not only the historical founders and open-source community, but also cloud and semiconductor giants. It's a far cry from the early, low-profile conferences.

At Scaleway R&D, we had anticipated this momentum and set out to design the first cloud offering of RISC-V instances. But adapting the RISC-V hardware available at the time to the constraints of cloud computing turned out to be a real challenge.

Project genesis

Our goal was clear: launch the world’s first RISC-V Bare Metal offering. The challenge? Use boards that were not designed for datacenters and turn them into servers capable of powering the first RISC-V cloud offering.

Fortunately, Scaleway already had a complete infrastructure in place to provision Bare Metal servers. All that remained was to integrate this new architecture into our existing software environment.

Here is how that journey began, and how Scaleway is now contributing to the RISC-V revolution.

Identifying the right SoCs and manufacturers

The first step was to assess what hardware could actually be used. We began by identifying the key players, suppliers, and manufacturers. At the time, the options were limited, but two SoCs (systems on a chip) stood out:

- TH1520, developed by T-Head (Alibaba Group’s semiconductor arm), powered by a Xuantie C910 processor.

- JH7110, developed by StarFive Technology, powered by a SiFive U74 processor.

For performance reasons, we chose the TH1520: our benchmarks showed that it delivered more computing power than the JH7110 SoC used on the VisionFive 2 board, which was essential for production use cases.

The first challenge: booting the boards

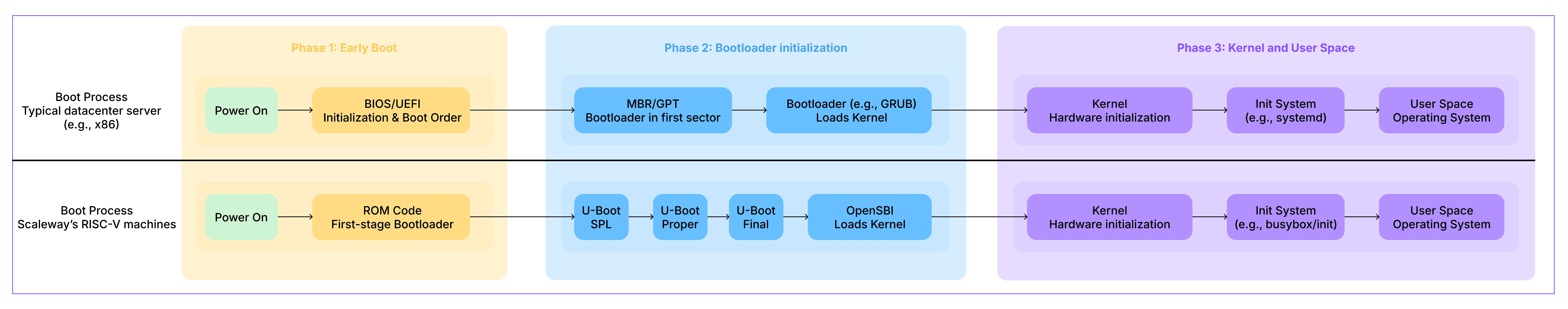

At the time of our research, there was no server-style motherboard compatible with UEFI boot for RISC-V boards, unlike in the x86 world. We had to work with a boot process inherited from embedded systems — and very unconventional for a datacenter.

The challenge was far from trivial: we had to get the boards to boot all the way into Linux user space. The diagram above illustrates the differences and similarities between the boot process of standard datacenter servers and that of our RISC-V machines.

That said, the available hardware is becoming richer as the RISC-V ecosystem matures. For example, the Milk-V Titan supports both UEFI and virtualization, but it is not yet commercially available. The situation mirrors the ARM world, which supports both server-style boot processes (UEFI, GPT) and lighter boot flows (ROM + U-Boot) for embedded systems.

Booting an operating system from manufacturer-provided images is relatively straightforward, but that is not enough for cloud integration: in a cloud environment, the machines must also be able to boot over the network. Several options were available to us — PXE, iPXE, and UEFI HTTP Boot — but we quickly found that only PXE actually worked. At the time, neither iPXE nor UEFI had been ported to this architecture.
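Network boot through U-Boot's PXE support relies on the PXELINUX convention for locating a boot configuration on the TFTP server. The Python sketch below reproduces that lookup order so you can see which file a given board will request. The MAC and IP addresses are made-up examples, and U-Boot may also try architecture- or board-specific `default-<arch>` style names before plain `default`, which this sketch leaves out.

```python
import ipaddress


def pxe_config_candidates(mac, ip, uuid=None):
    """List the pxelinux.cfg/ files a PXELINUX-style client tries, in order.

    1. the client UUID, if one is configured;
    2. '01-' (ARP type 1, Ethernet) followed by the MAC address, lowercase,
       with dashes;
    3. the IPv4 address as 8 uppercase hex digits, then shortened one
       digit at a time, so a single file can match a whole subnet;
    4. 'default'.
    """
    names = []
    if uuid:
        names.append(uuid.lower())
    names.append("01-" + mac.lower().replace(":", "-"))
    hex_ip = "%08X" % int(ipaddress.IPv4Address(ip))
    names += [hex_ip[:n] for n in range(8, 0, -1)]
    names.append("default")
    return ["pxelinux.cfg/" + name for name in names]


for path in pxe_config_candidates("00:1A:2B:3C:4D:5E", "192.168.2.91")[:3]:
    print(path)
# pxelinux.cfg/01-00-1a-2b-3c-4d-5e
# pxelinux.cfg/C0A8025B
# pxelinux.cfg/C0A8025
```

The shortened hex prefixes are what make it practical to serve one configuration to a whole rack of identical boards while still overriding a single machine by its MAC address.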

Over PXE, we first booted a minimal Linux system (BusyBox) and then a full Debian distribution. This confirmed that the boards were mature enough to be used in a real offering.

We use two chained U-Boot stages: a first, minimal one, fixed in eMMC, which ensures a stable and immutable boot, followed by a final U-Boot loaded afterward, which we can update over time. This separation prevents any unintended client-side changes while still allowing us to update the bootloader.

In the end, the full boot process is as follows. The first stages cannot be modified by the customer:

- Server startup

- Execution of the minimal proprietary code that loads the first-stage bootloader

- Execution of the first-stage bootloader (U-Boot SPL), responsible in particular for loading the second-stage bootloader

- Execution of the second-stage bootloader (U-Boot Proper), which loads the final bootloader

Up to this point, the process is standard for an embedded environment. The next part, however, can be modified by the customer:

- Execution of the final bootloader (again U-Boot Proper, but configured differently), which loads OpenSBI and the Linux kernel

- Initialization of OpenSBI, the reference implementation of the RISC-V Supervisor Binary Interface (SBI) specification, which acts as a firmware abstraction layer between the hardware and the OS. It allows the kernel to communicate with the hardware through a standardized interface for privileged operations

- Linux kernel startup
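The SBI that OpenSBI implements is essentially a calling convention between the kernel and the firmware. The following Python toy model is our own illustration, not OpenSBI code: it shows the shape of a call into the SBI Base extension, with its register roles, extension and function IDs, and the (error, value) return pair. The constants come from the SBI specification; the default spec version of 2.0 is an arbitrary choice.

```python
from dataclasses import dataclass

# Constants from the RISC-V SBI specification.
SBI_SUCCESS = 0
SBI_ERR_NOT_SUPPORTED = -2
EID_BASE = 0x10            # Base extension ID
FID_GET_SPEC_VERSION = 0
FID_GET_IMPL_ID = 1
FID_PROBE_EXTENSION = 3
IMPL_ID_OPENSBI = 1        # implementation ID registered for OpenSBI


@dataclass
class SbiRet:
    error: int  # returned to the kernel in register a0
    value: int  # returned to the kernel in register a1


class ToySbiFirmware:
    """Toy model of the firmware side of an SBI call (not OpenSBI code).

    On real hardware the S-mode kernel puts the extension ID in a7, the
    function ID in a6 and arguments in a0-a5, then executes `ecall`; the
    M-mode firmware answers with an (error, value) pair in a0/a1.
    """

    def __init__(self, spec_major=2, spec_minor=0):
        # Spec version encoding: major in bits 30:24, minor in bits 23:0.
        self.spec_version = (spec_major << 24) | spec_minor
        self.extensions = {EID_BASE}

    def ecall(self, a7, a6, a0=0):
        if a7 != EID_BASE:
            return SbiRet(SBI_ERR_NOT_SUPPORTED, 0)
        if a6 == FID_GET_SPEC_VERSION:
            return SbiRet(SBI_SUCCESS, self.spec_version)
        if a6 == FID_GET_IMPL_ID:
            return SbiRet(SBI_SUCCESS, IMPL_ID_OPENSBI)
        if a6 == FID_PROBE_EXTENSION:
            # A non-zero value means the probed extension is available.
            return SbiRet(SBI_SUCCESS, int(a0 in self.extensions))
        return SbiRet(SBI_ERR_NOT_SUPPORTED, 0)


fw = ToySbiFirmware()
ret = fw.ecall(a7=EID_BASE, a6=FID_GET_SPEC_VERSION)
print(ret.error, ret.value >> 24)  # → 0 2
```

This is why the same kernel can run on different RISC-V boards: it only needs to speak the SBI convention, and each board's firmware handles the hardware-specific details behind it.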

At that point, we could boot RISC-V servers. The next step was to integrate them into our datacenters.

Hardware design: from prototype to rack-mount chassis

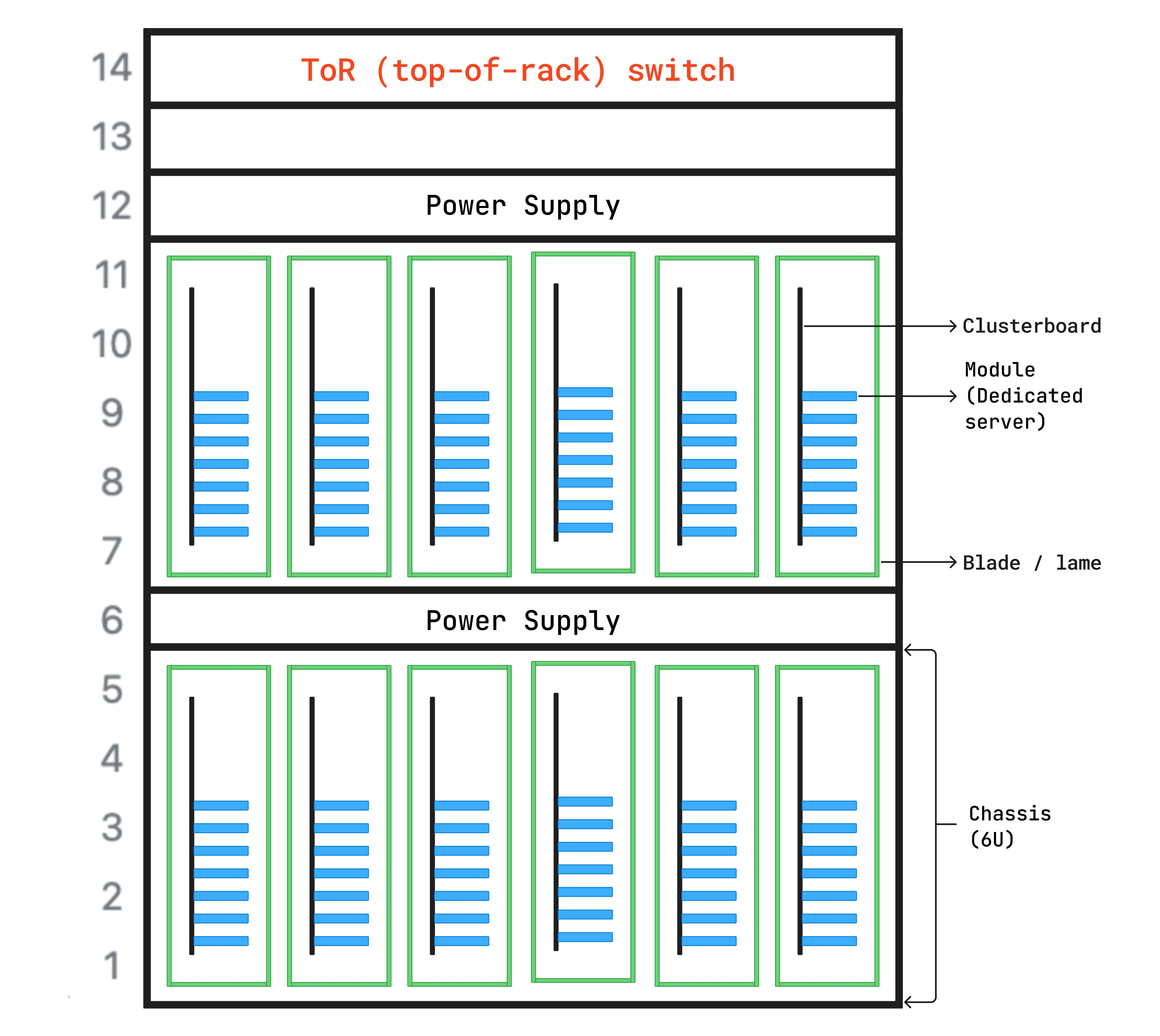

To understand our integration challenge, it helps to recall how a datacenter is physically structured:

- Rack: a standardized metal cabinet divided into standard-size units (often 52U high, where 1U = 1.75 inches, or about 4.45 cm), allowing servers, switches, and power equipment to be stacked in an optimized space with centralized airflow and power.

- Chassis: a metal enclosure mounted in the rack, directly housing the hardware (CPU, RAM, disks) or allowing removable components to be inserted (drives or even servers in blade format).

- Blades: removable trays inserted into the chassis, allowing a server to be replaced without shutting everything down.

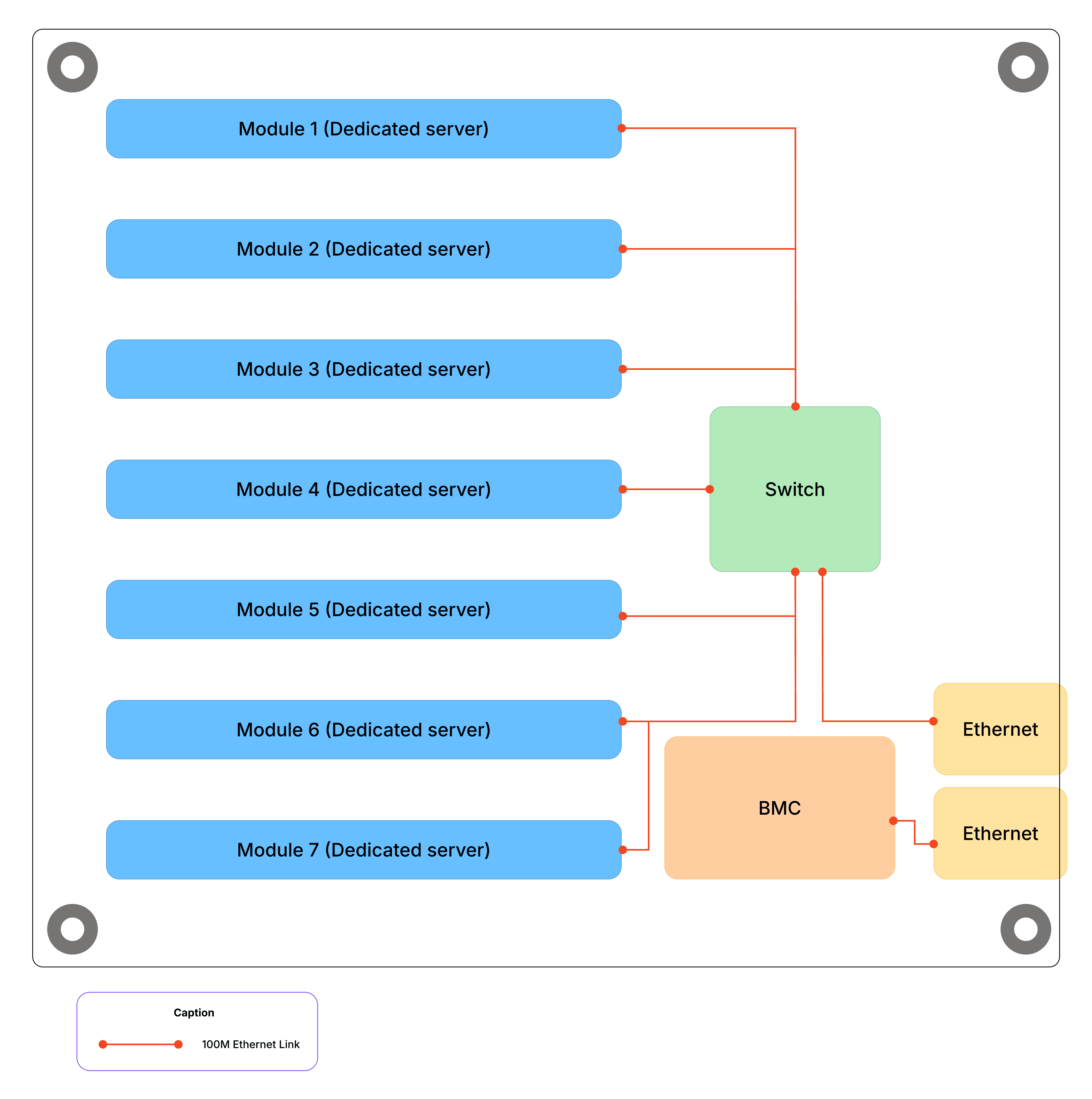

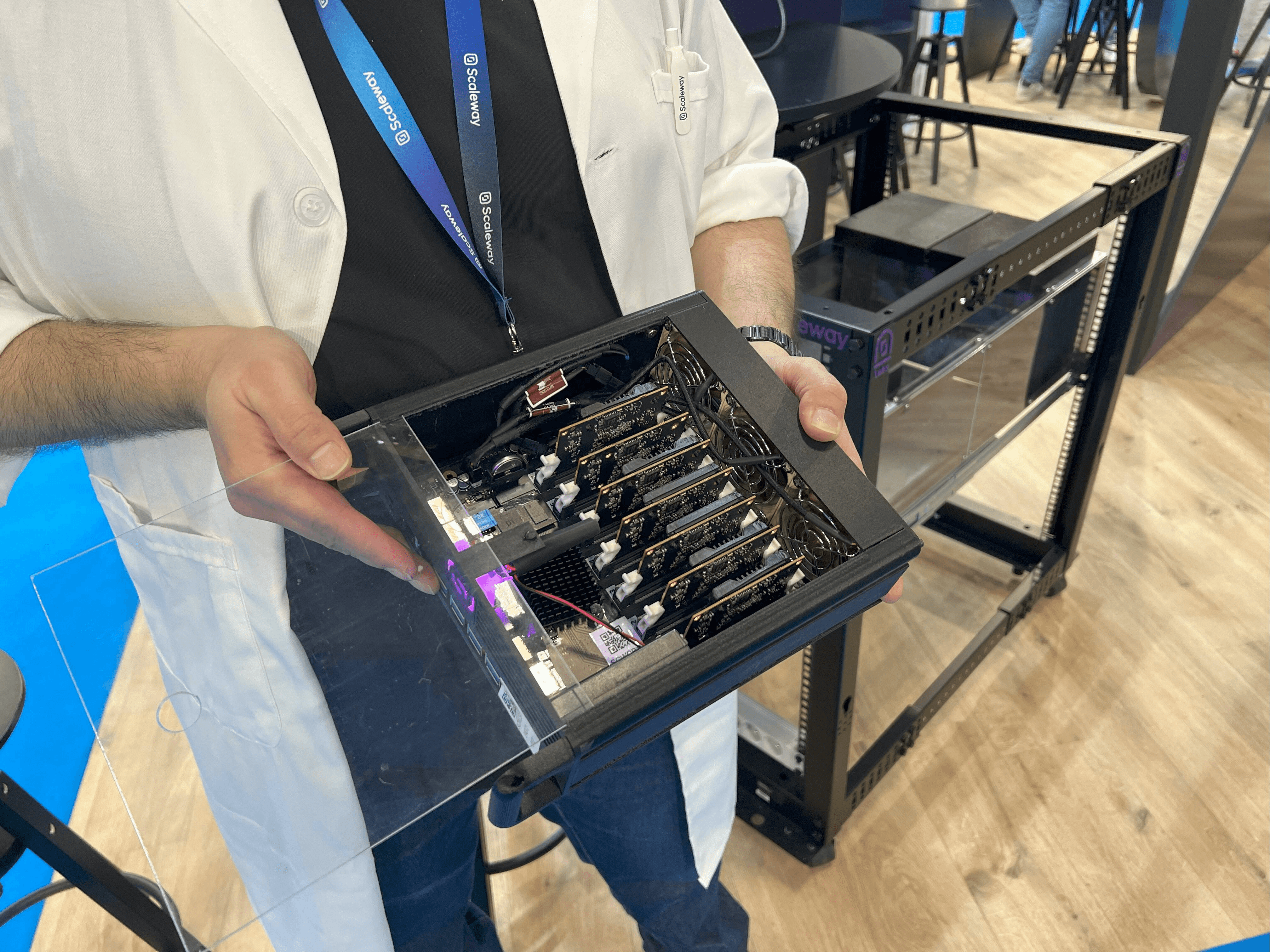

We opted for blades, each holding a clusterboard (a motherboard that groups mini-servers as removable modules) carrying 7 dedicated RISC-V servers.

This approach enables high server density. We can fit 12 blades of 7 servers each into a 5U chassis, plus 1U for power supply.
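As a back-of-the-envelope check of that density, the arithmetic fits in a small Python function. The 52U rack height and the one-power-shelf-per-chassis layout are assumptions taken from the figures in this article. A real rack also hosts networking gear, so the per-rack figure is an upper bound, not what we actually deploy.

```python
def rack_capacity(rack_units=52, chassis_units=5, psu_units=1,
                  blades_per_chassis=12, servers_per_blade=7):
    """Return (servers per chassis, upper bound on servers per rack).

    Assumes every chassis comes with its own 1U power shelf and ignores rack
    space used by switches, patch panels and cable management.
    """
    servers_per_chassis = blades_per_chassis * servers_per_blade
    chassis_per_rack = rack_units // (chassis_units + psu_units)
    return servers_per_chassis, chassis_per_rack * servers_per_chassis


per_chassis, per_rack = rack_capacity()
print(per_chassis, per_rack)  # → 84 672
```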

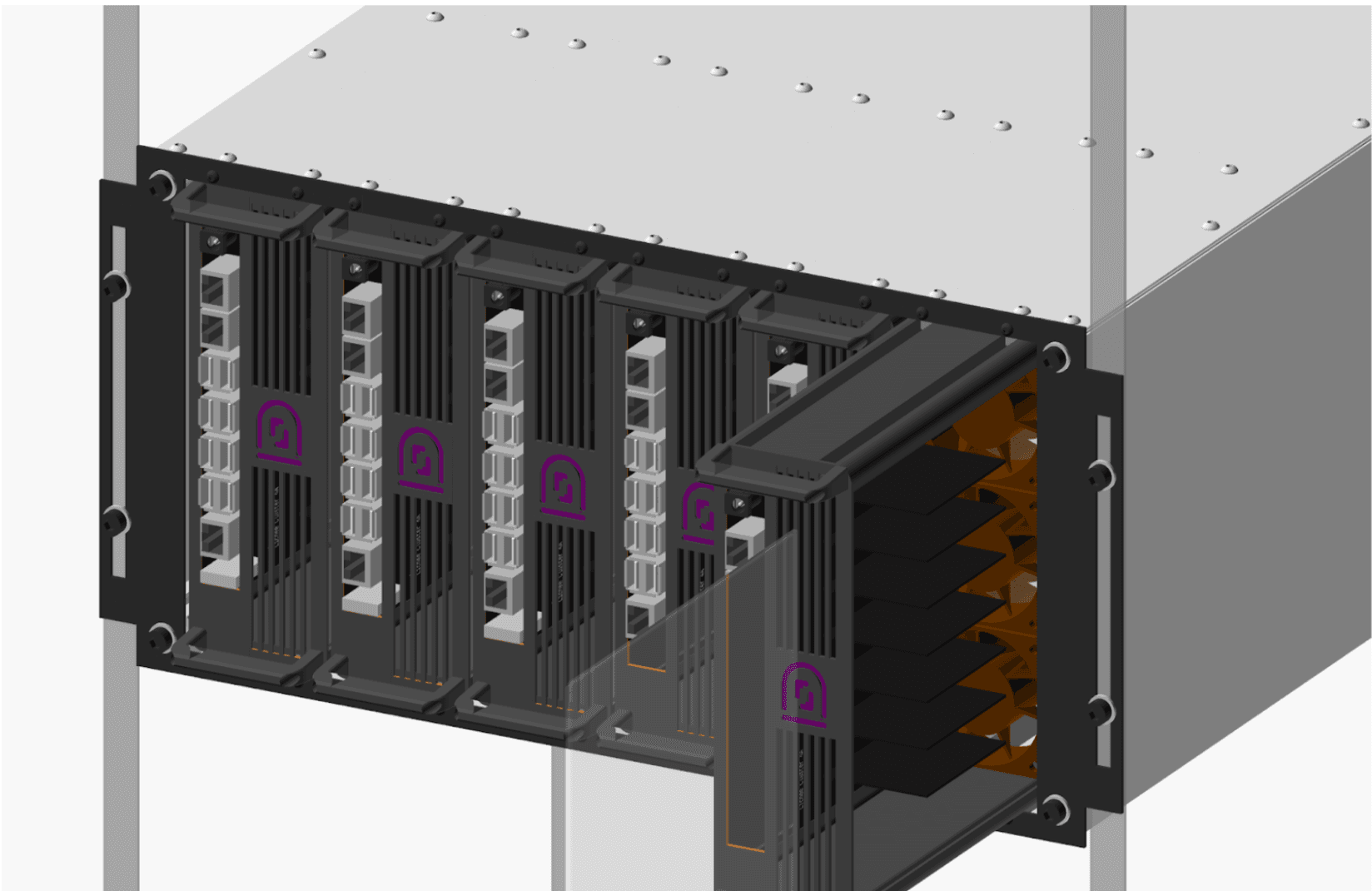

Blade design and 3D modeling

The clusterboards use the mini-ITX format. While compact and practical for development, this format is not suitable for direct datacenter integration, simply because it cannot be mounted in a standard rack. To make these clusterboards production-ready, we had to carry out a full mechanical design effort. The goal was to combine:

- a high number of servers per height unit,

- controlled power management,

- and effective air-cooling.

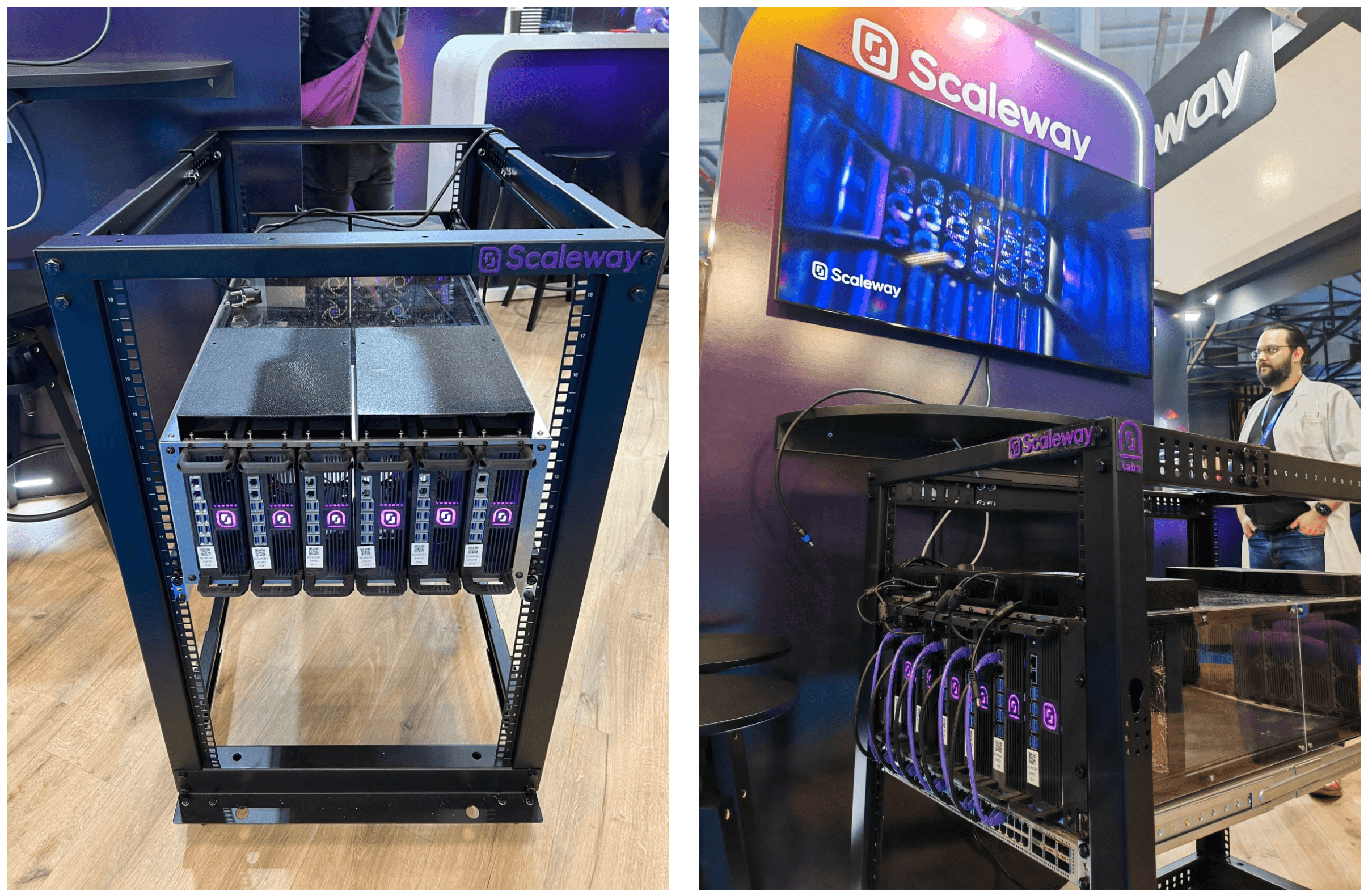

To achieve this, we designed and 3D-printed custom blades capable of housing our clusterboards. We developed a 5U chassis that can hold twelve blades simultaneously — six in the front and six in the back. This approach allows us to integrate our RISC-V servers into standard datacenter racks while maximizing density, with up to 84 dedicated servers per chassis.

Energy efficiency

In terms of power consumption, a clusterboard uses around 55W on average, or roughly 660W for a full chassis of 12 blades. By comparison, a typical x86 Dell server (Dell R660) consumes about 350W per 1U, which would amount to 1750W for 5U of Dell servers: five servers, versus the 84 hosted in our RISC-V chassis. Of course, the raw computing power of a latest-generation x86 processor remains significantly higher today.

But that is not the real point of the comparison. What matters here is efficiency — the ratio between performance and energy consumption.
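Breaking the averages quoted above down per server shows why efficiency, rather than raw power draw, is the right yardstick. Each RISC-V server draws roughly 8 W, but it also delivers far less compute than a 350 W x86 server, so the two can only be compared meaningfully through a performance-per-watt ratio $\eta$. The figures below are the averages from this article, not new measurements.

```latex
\begin{aligned}
P_{\text{chassis}} &= 12 \times 55\ \text{W} = 660\ \text{W} \\
P_{\text{server}} &= \frac{660\ \text{W}}{84} \approx 7.9\ \text{W} \\
P_{\text{x86},\,5\text{U}} &= 5 \times 350\ \text{W} = 1750\ \text{W} \\
\eta &= \frac{\text{performance}}{\text{power}}
\end{aligned}
```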

This RISC-V-based design, combined with a compact mechanical structure and low power usage, makes for excellent server density per rack.

All the blades containing our RISC-V servers were fully 3D-printed on our premises in Paris, while the chassis was laser-cut from metal sheets. Every manufacturing step was carried out in-house, allowing us to refine and improve the design at each iteration. Material choice was also important, since it had to be certified to meet datacenter requirements.

Integration into the cloud infrastructure

Software challenges: networking, BMC, and U-Boot

Once the hardware side was in place, integrating these RISC-V servers into our Elastic Metal offering raised software challenges, especially at the network level. Each module had to be isolated so that no communication could occur between modules on the same clusterboard.
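This article does not detail how that isolation is enforced. One common technique is port isolation (also called protected ports or private VLAN edge) on the switch that serves the modules: each module port may exchange frames only with the uplink, never with a sibling port. The Python sketch below models that rule as a forwarding table. The port names are hypothetical, and this is an illustration of the technique, not a description of our network configuration.

```python
def isolated_forwarding(module_ports, uplink):
    """Build a port-isolation table for one clusterboard switch.

    Each module port may forward only to the uplink; the uplink may reach
    every module. Returns {port: set of ports it may forward frames to}.
    """
    table = {port: {uplink} for port in module_ports}
    table[uplink] = set(module_ports)
    return table


def can_forward(table, src, dst):
    """True if frames entering on `src` may leave on `dst`."""
    return dst in table.get(src, set())


modules = [f"module{i}" for i in range(1, 8)]  # 7 servers per clusterboard
table = isolated_forwarding(modules, "uplink")
print(can_forward(table, "module1", "module2"))  # → False
print(can_forward(table, "module1", "uplink"))   # → True
```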

We also had to integrate a BMC (Baseboard Management Controller), a system that allows a server to be managed remotely (power-on, monitoring, rebooting) independently of the operating system. It is typically a separate component positioned next to the hardware it monitors. For our needs, we had to customize OpenBMC, an open-source firmware project originally launched by Facebook (now Meta), and integrate an IPMI controller adapted to the hardware.

IPMI (Intelligent Platform Management Interface) serves as an out-of-band emergency management interface, allowing us to control a server’s power supply even when its operating system is unresponsive.
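To illustrate what that power control looks like in practice, the standard IPMI Chassis Control command can be sent with ipmitool's `raw` subcommand (the friendlier `ipmitool chassis power cycle` does the same). The Python helper below only builds the command line; the BMC host name and user are hypothetical, and nothing here describes our internal tooling.

```python
import shlex

# IPMI "Chassis Control" request: NetFn 0x00 (Chassis), command 0x02, and a
# single data byte selecting the action (values from the IPMI v2.0 spec).
CHASSIS_CONTROL = {
    "off": 0x00,    # power down
    "on": 0x01,     # power up
    "cycle": 0x02,  # power cycle
    "reset": 0x03,  # hard reset
    "diag": 0x04,   # pulse diagnostic interrupt
    "soft": 0x05,   # soft shutdown, handled by the OS via ACPI
}


def chassis_control_argv(bmc_host, user, action):
    """Build (but do not run) an ipmitool command line for Chassis Control.

    `-I lanplus` selects IPMI v2.0 over the network and `-E` makes ipmitool
    read the password from the IPMI_PASSWORD environment variable.
    """
    data = CHASSIS_CONTROL[action]
    return ["ipmitool", "-I", "lanplus", "-H", bmc_host, "-U", user, "-E",
            "raw", "0x00", "0x02", f"0x{data:02x}"]


print(shlex.join(chassis_control_argv("bmc-node42.example", "admin", "cycle")))
# → ipmitool -I lanplus -H bmc-node42.example -U admin -E raw 0x00 0x02 0x02
```

Because the BMC answers independently of the host CPU, this command still works when the server's operating system has crashed, which is what makes it useful as an emergency interface.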

Provisioning system and production rollout

The final challenge was scaling all of these deployment steps. In addition to manufacturing the chassis and blades in series, we set up a provisioning system able to handle firmware flashing, per-unit quality control, registration, and declaration in Scaleway’s production system.
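The provisioning flow described above is essentially a fail-fast pipeline. The following Python sketch shows that structure under our own assumptions: the step names mirror the stages listed in the text, while the serial number format and the stub functions are hypothetical placeholders, not our actual tooling.

```python
from typing import Callable, List, Tuple

# A step is a name plus a callable that takes a unit's serial number and
# returns True on success.
Step = Tuple[str, Callable[[str], bool]]


def provision(serial: str, steps: List[Step]) -> Tuple[bool, List[str]]:
    """Run the steps in order and stop at the first failure.

    Returns (success, completed step names), so that a failing unit can be
    set aside for inspection instead of being declared to production.
    """
    completed: List[str] = []
    for name, run in steps:
        if not run(serial):
            return False, completed
        completed.append(name)
    return True, completed


# Hypothetical stand-ins for the real flashing, QC and registration tools.
steps: List[Step] = [
    ("flash_firmware", lambda serial: True),
    ("quality_check", lambda serial: True),
    ("register", lambda serial: True),
    ("declare_in_production", lambda serial: True),
]
print(provision("RV1-0001", steps))
# → (True, ['flash_firmware', 'quality_check', 'register', 'declare_in_production'])
```

Ordering quality control before registration means a defective module never appears in the production inventory.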

Once that process was fully in place, the servers could leave the workshop and be racked in our datacenters, turning an R&D experiment into a real cloud offering.

Launch of the EM-RV1 Bare Metal offering

EM-RV1 specifications

Once the first RISC-V servers had been integrated into our production infrastructure, what had started as a proof of concept in the R&D lab became a real product for our customers. On February 29, 2024, we officially launched our RISC-V Bare Metal offering with the EM-RV1, featuring:

- A system based on the T-Head TH1520 SoC, with:

  - a 4-core 1.85 GHz RISC-V CPU (C910)

  - an OpenCL/Vulkan-compatible GPU

  - a 4 TOPS NPU for AI

- 16 GB of LPDDR4 RAM

- 128 GB of eMMC storage

- A 100 Mbit/s network connection

- Cloud-standard OS images: Debian, Ubuntu, and Alpine

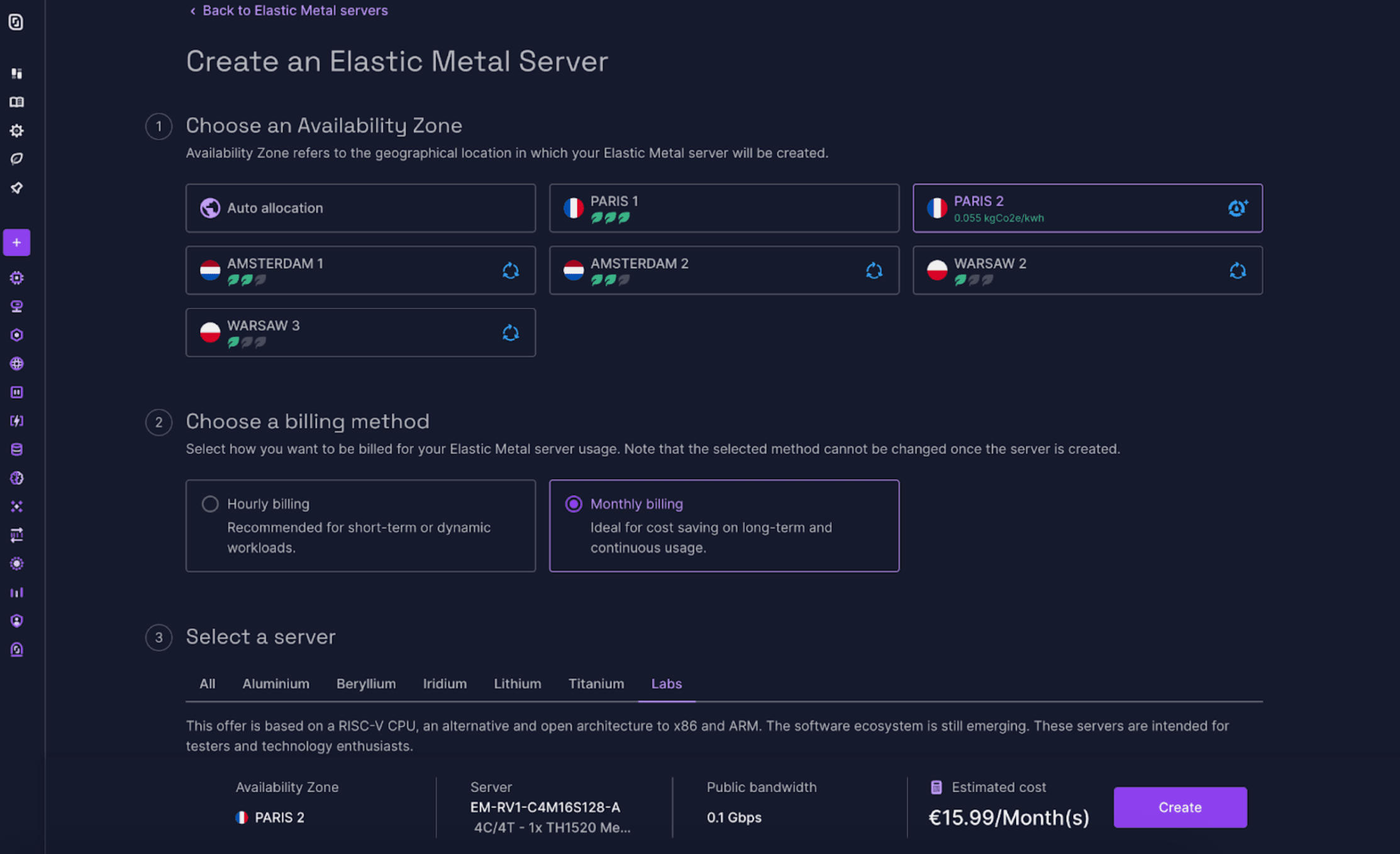

Available on the Scaleway console:

- Product: Elastic Metal

- Zone: Paris 2

- Tab: Labs

Looking back: success and improvements

The success was immediate, and the servers quickly sold out, forcing us to put more into production.

But we did not stop there. We kept expanding the offering by adding Fedora, Kosmos, and even Android 12, thanks to the team at Alibaba’s DAMO Academy, which has made a major contribution to the development of RISC-V SoCs. For the more adventurous, we even made the servers’ serial console available, and with it access to the bootloader, making it possible to install the most exotic operating systems.

Looking for a challenge? Why not try installing Arch Linux directly from the serial console?

And because we like to do things properly, we also added features such as Flexible IP and Private Network, giving customers even more ways to customize their configuration, with full control over their network and IPs.

The “GhostWrite” vulnerability in C910 RISC-V chips

But innovation rarely comes without obstacles. Researchers from the CISPA Helmholtz Center for Information Security alerted us to a vulnerability called “GhostWrite” in the C910 RISC-V chips deployed in our cloud.

This vulnerability allowed unprivileged attackers to perform arbitrary memory writes using a vector store instruction, vse1024.v, implemented as part of the C910’s vector extension (V). This could lead to privilege escalation all the way up to the most privileged level (Ring 0, in x86 terms).

It is important to note, however, that this instruction is not part of the official RVV 0.7.1 draft of the vector extension implemented in our servers. That draft is an intermediate version, incompatible with the final RVV 1.0 release. Because RVV 0.7.1 is barely used in production software, disabling the vector extension has no significant impact on server performance.

In response, we disabled the V vector extension by default on our servers through a kernel update, even before the vulnerability was made public. Instructions to re-enable the extension are available in our documentation.
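To check what your kernel currently exposes, you can look at the `isa` line of `/proc/cpuinfo`. The Python sketch below parses that line. The exact string depends on the kernel version: recent mainline kernels report T-Head’s draft vector support as `xtheadvector`, while other kernels may show a `v` or nothing at all once the extension is disabled. Treat it as a diagnostic aid, not as the procedure described in our documentation.

```python
def vector_status(cpuinfo_text):
    """Report which vector extension, if any, /proc/cpuinfo advertises.

    Reads the first 'isa' line. Returns 'xtheadvector' for T-Head's
    pre-ratification vector extension, 'v' for a 'v' in the single-letter
    part of the ISA string, or None if no vector extension is listed.
    """
    for line in cpuinfo_text.splitlines():
        key, sep, value = line.partition(":")
        if not sep or key.strip() != "isa":
            continue
        base, *multi = value.strip().lower().split("_")
        if "xtheadvector" in multi:
            return "xtheadvector"
        # Single-letter extensions follow the 'rv32' / 'rv64' prefix.
        return "v" if "v" in base[4:] else None
    return None


sample = "processor\t: 0\nisa\t\t: rv64imafdc_zicsr_zifencei\n"
print(vector_status(sample))  # → None
```

On a server, pass it the contents of the real file, for example by reading `/proc/cpuinfo` with `open()` and calling `vector_status` on the result.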

In practical terms, what can you do with a RISC-V server?

Everything you would do on a conventional architecture. At the time our product launched, a little over 96% of Linux packages (Debian, Fedora, Ubuntu) were compatible with RISC-V; today, that figure is around 98%. So web servers, databases, Kubernetes clusters — it all works.

Compilers such as GCC and LLVM are continuing to evolve and improve for RISC-V, and while Docker image support is still progressing, it is already possible to build and test software across multiple architectures with multi-arch CI/CD pipelines. In short, RISC-V gives you a way to deploy, test, and innovate within an open ecosystem that is rapidly maturing.
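As a small example of the plumbing such multi-arch pipelines rely on, the Python helper below maps the machine name reported by `uname -m` (for instance `riscv64` on an EM-RV1) to the platform strings Docker expects, and assembles the `--platform` list for `docker buildx build`. The mapping covers only the three architectures discussed in this article and is an assumption of this sketch.

```python
import platform

# `uname -m` machine names mapped to OCI/Docker platform strings.
MACHINE_TO_PLATFORM = {
    "x86_64": "linux/amd64",
    "amd64": "linux/amd64",
    "aarch64": "linux/arm64",
    "arm64": "linux/arm64",
    "riscv64": "linux/riscv64",
}


def oci_platform(machine=None):
    """Return the Docker platform for `machine` (default: the current host)."""
    name = (machine or platform.machine()).lower()
    if name not in MACHINE_TO_PLATFORM:
        raise ValueError(f"unsupported machine type: {name!r}")
    return MACHINE_TO_PLATFORM[name]


def buildx_platforms(machines):
    """Value for `docker buildx build --platform`, e.g. in a CI matrix."""
    return ",".join(sorted({oci_platform(m) for m in machines}))


print(buildx_platforms(["x86_64", "aarch64", "riscv64"]))
# → linux/amd64,linux/arm64,linux/riscv64
```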

Why, despite its advantages, has RISC-V not yet been adopted at scale?

Despite its strengths, RISC-V remains a young technology compared with x86 and ARM, which have been entrenched for decades. Its adoption still faces two major obstacles:

- The risk of fragmentation: as explained above, this is the main concern. If the standard is too flexible, software may become incompatible from one processor to another.

- Ecosystem maturity: the hardware exists, but the software still has to catch up. Migrating critical applications takes time and requires significant investment to close the optimization gap. To help share those development costs, major players including Google, Samsung, and Nvidia have joined forces in the RISE project (RISC-V Software Ecosystem).

The future of RISC-V at Scaleway

Launching the first RISC-V Bare Metal offering was only the beginning.

The ecosystem is industrializing rapidly, with the arrival of high-performance servers such as Sophgo’s SRA3-40, native support from Ubuntu for the RVA23 profile, and the creation of working groups such as the Data Center SIG within RISC-V International.

In May 2024, Scaleway joined RISC-V International (formerly the RISC-V Foundation), confirming our commitment to the architecture and our intention to continue developing and offering innovative services based on RISC-V. We also regularly speak at major events such as the RISC-V Summit.

The age of RISC-V in the datacenter has begun, and we are proud to be playing a full part in it.

Many thanks to my colleagues at Scaleway Labs — Antoine Blin, Antoine Radet, Cédric Courtaud, and Ludovic Le Frioux — for their valuable advice and careful review of this article.