Exposing Kubernetes services to the internet

By default, Kubernetes clusters are not exposed to the internet. This prevents external users from being able to access the application deployed in your cluster.

There are several ways to expose your cluster to the internet. This page explains and compares them, and points you to resources to help you get started.

Comparison of cluster exposure methods

In Kubernetes, you generally need to use a Service to expose an application in your cluster to the internet. A service groups together pods performing the same function (e.g. running the same application) and defines how to access them.
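
As an illustration of how a service selects its pods, here is a minimal sketch of a Service manifest. The name `my-app`, the label `app: my-app` and the port numbers are hypothetical placeholders, not values taken from this documentation.

```yaml
# Minimal sketch of a Service (all names and ports are illustrative assumptions).
apiVersion: v1
kind: Service
metadata:
  name: my-app            # hypothetical service name
spec:
  # No "type" is set, so Kubernetes applies the default type (ClusterIP),
  # which is reachable only from inside the cluster.
  selector:
    app: my-app           # groups every pod carrying this label
  ports:
    - protocol: TCP
      port: 80            # port exposed by the service
      targetPort: 8080    # port the pods are assumed to listen on
```

Traffic sent to the service on port 80 is balanced across all pods whose labels match the selector.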

The most basic type of service is ClusterIP, but it provides access to the defined pods only from within the cluster. NodePort and LoadBalancer services both provide external access. An Ingress (which is not a service but an API object inside the cluster), combined with an explicitly created ingress controller, is another way to expose the cluster.

See the table below for more information.

| Method | Description | Suitable for | Limitations |

|---|---|---|---|

| Cluster IP Service | • Provides internal connectivity between cluster components. • Has a fixed IP address, from which it balances traffic between pods with matching labels. | Internal communication between different components within a cluster | Cannot be used to expose an application inside the cluster to the public internet. |

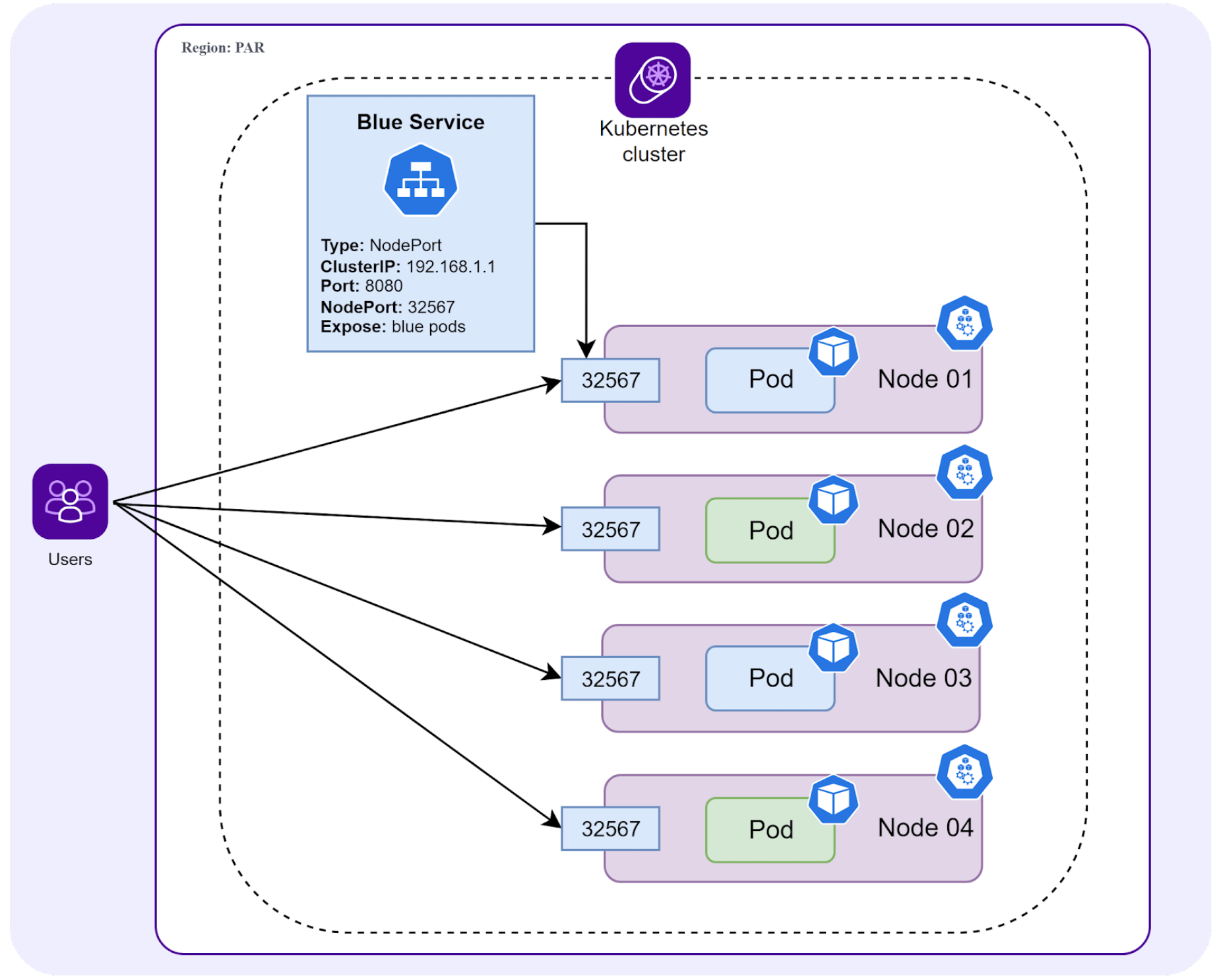

| Node Port Service | • Exposes a specific port on each node of a cluster. • Forwards external traffic received on that port to the right pods. | • Exposing single-node, low-traffic clusters to the internet for free • Testing. | • Not ideal for production or complex clusters • Single point of failure. • Not all port numbers can be opened. |

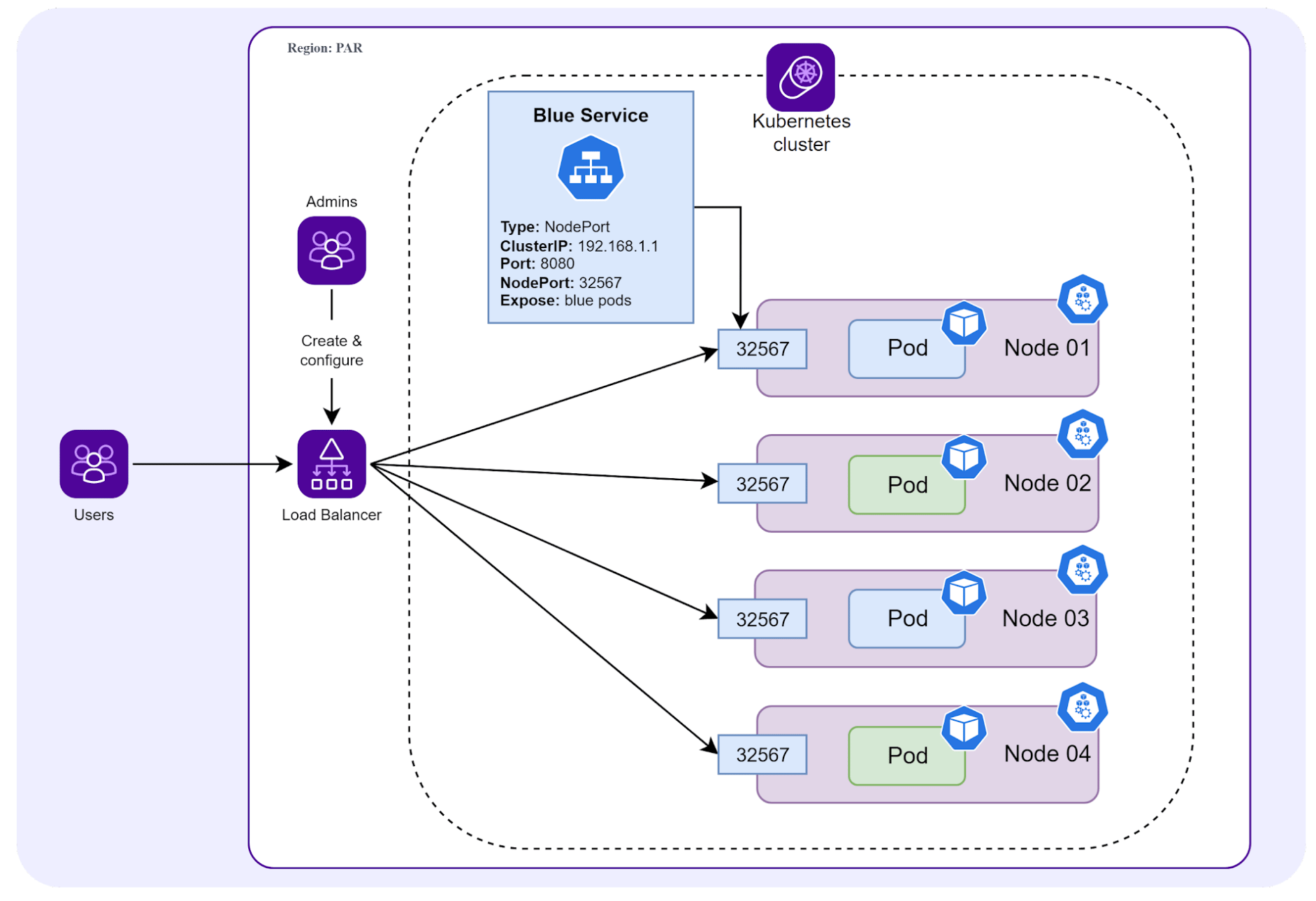

| Load Balancer Service | • Creates a single, external Load Balancer with a public IP. • External LB forwards all traffic to the corresponding LoadBalancer service within the cluster. • LoadBalancer service then forwards traffic to the right pods. • Operates at the L4 level. | • Exposing a service in the cluster to the internet. • Production envs (highly available). • Dealing with TCP traffic. | • Each service in the cluster needs its own Load Balancer (can become costly). |

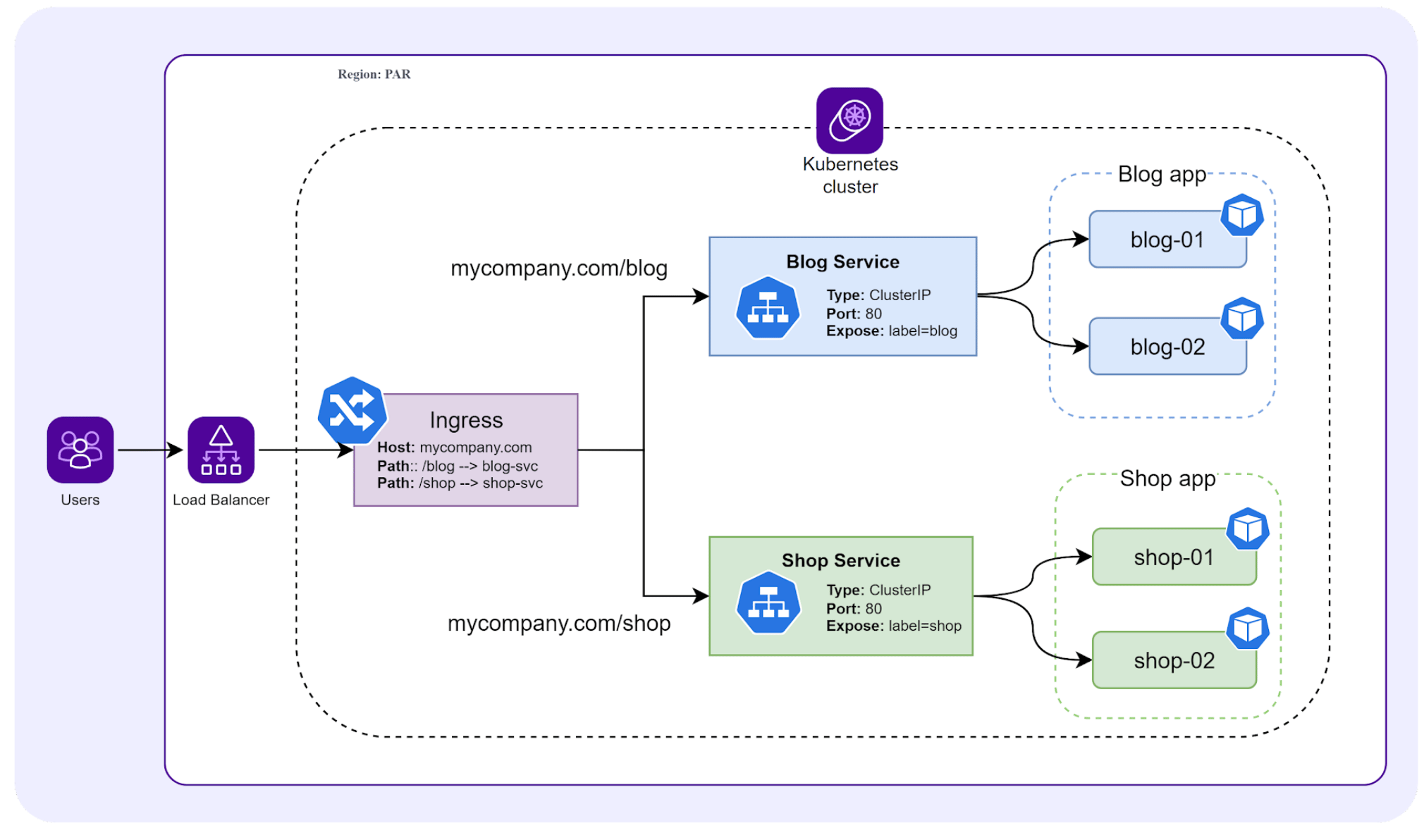

| Ingress | • A native resource inside the cluster (not a service). • Ingress controller receives a single, external public IP, usually in front of a spun-up external HTTP Load Balancer. • Uses a set of rules to forward web traffic (HTTP(S)) to the correct service out of multiple services within the cluster. • Each service then sends the traffic to a suitable pod. • Operates at the L7 level. | • Clusters with many services. • Dealing with HTTP(S) traffic. | • Requires an ingress controller (not included by default, must be created). • Designed for HTTP(S) traffic only (more complicated to configure for other protocols). |

Our webinar may also be useful to you when considering how to expose your cluster. From 5m47 to 13m43, the different methods to expose a cluster are described and compared.

NodePort Service

For more information and practical help with creating a NodePort Service, check out the following resources:

- Exposing a service via NodePort - Tutorial

- Exposing a service via NodePort - Video demonstration (from 23m52)

- Comparison of NodePort vs LoadBalancer
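
For orientation before following the resources above, a NodePort Service could look like the sketch below. The service name, label, and port values are hypothetical. The `nodePort` value is an assumption chosen from the default Kubernetes range (30000-32767).

```yaml
# Minimal sketch of a NodePort Service (illustrative values only).
apiVersion: v1
kind: Service
metadata:
  name: my-app-nodeport   # hypothetical service name
spec:
  type: NodePort
  selector:
    app: my-app           # pods that should receive the traffic
  ports:
    - protocol: TCP
      port: 80            # port of the service inside the cluster
      targetPort: 8080    # port the pods are assumed to listen on
      nodePort: 30080     # port opened on every node; omit it to let
                          # Kubernetes pick a free port in the allowed range
```

Once applied with `kubectl apply -f`, the application should be reachable on port 30080 of any node's public IP, provided that the nodes have public IPs and no firewall rule blocks the port.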

LoadBalancer Service

For more information and practical help with creating a LoadBalancer Service, check out the following resources:

- Creating and configuring a Load Balancer service

- Getting started with Kubernetes Load Balancers - Tutorial

- Getting started with Kubernetes Load Balancers - Video demonstration
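
As a rough sketch of what the resources above walk through, a LoadBalancer Service differs from the other service types mainly in its `type` field. The names and ports below are hypothetical, and any provider-specific annotations are deliberately left out.

```yaml
# Minimal sketch of a LoadBalancer Service (illustrative values only).
apiVersion: v1
kind: Service
metadata:
  name: my-app-lb         # hypothetical service name
spec:
  type: LoadBalancer      # asks the cloud provider to create an external Load Balancer
  selector:
    app: my-app           # pods that should receive the traffic
  ports:
    - protocol: TCP
      port: 80            # port exposed on the external Load Balancer
      targetPort: 8080    # port the pods are assumed to listen on
```

After the manifest is applied, `kubectl get service my-app-lb` shows the public address in the `EXTERNAL-IP` column once the provider has finished creating the Load Balancer.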

Ingress

For more information and practical help with setting up ingress, check out the following resources:

- How to deploy an ingress controller

- Using a Load Balancer to expose your ingress controller

- Configuring a Load Balancer for your Kubernetes applications - Webinar (from the 14-minute mark to the 48-minute mark): deep dive and practical demonstration of how to create an ingress controller with a Load Balancer for your cluster
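
To give an idea of the rule-based routing that an ingress provides, the sketch below sends requests to two different services depending on the URL path. The host `example.com`, the services `shop` and `blog`, and the ingress class `nginx` are all assumptions. The class name must match the ingress controller actually deployed in your cluster.

```yaml
# Minimal sketch of an Ingress with path-based rules (illustrative values only).
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: my-ingress            # hypothetical ingress name
spec:
  ingressClassName: nginx     # assumed class; depends on your ingress controller
  rules:
    - host: example.com       # hypothetical hostname
      http:
        paths:
          - path: /shop
            pathType: Prefix
            backend:
              service:
                name: shop    # hypothetical service receiving /shop traffic
                port:
                  number: 80
          - path: /blog
            pathType: Prefix
            backend:
              service:
                name: blog    # hypothetical service receiving /blog traffic
                port:
                  number: 80
```

The Ingress resource has no effect on its own: an ingress controller must be running in the cluster to read these rules and route the traffic accordingly.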

Ingress or LoadBalancer?

When considering whether to expose your application via ingress (and an ingress controller) or a LoadBalancer service, consider the following:

- A Load Balancer on its own may be sufficient if you want to expose only one or a few services in your cluster. Each service will need its own external Load Balancer and LoadBalancer service.

- A Load Balancer is also the most appropriate option for dealing with external TCP traffic.

- An ingress controller with an external Load Balancer may be most appropriate if you have multiple services running in your cluster and you want to direct HTTP traffic from a single external access point to different services within your cluster, based on a set of rules.