How to upgrade the Kubernetes version on a Kapsule cluster

You can either upgrade your Kubernetes Kapsule cluster directly from the Scaleway console, or upgrade your cluster to the next minor version by using the CLI. The CLI section of this how-to guide also provides additional mandatory information for checking important components before proceeding with the upgrade of your cluster.

Upgrading a Kapsule cluster from the Scaleway console

Before you start

To complete the actions presented below, you must have:

- A Scaleway account logged into the console

- Owner status or IAM permissions allowing you to perform actions in the intended Organization

- Created a Kubernetes Kapsule cluster running on a Kubernetes version older than the latest release

- Click Kubernetes under Containers on the side menu. A list of your Kubernetes Kapsule clusters displays.

- From the drop-down menu, select the geographical region you want to manage.

- Click the name of the cluster whose Kubernetes version you want to upgrade. The cluster information page displays.

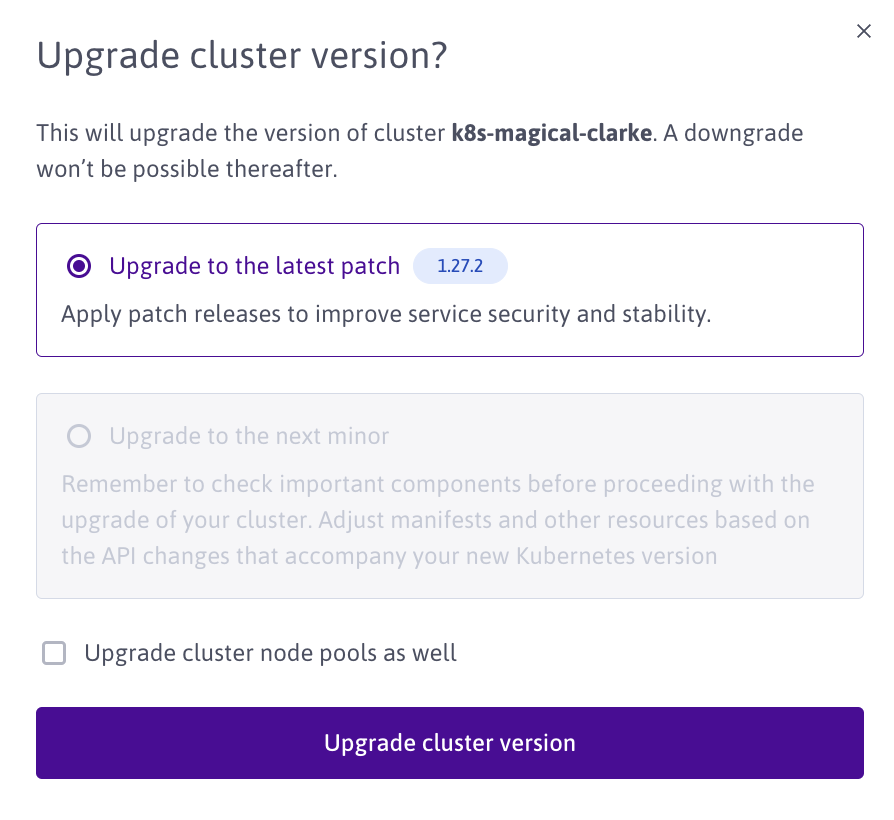

- Click Upgrade next to the Kubernetes version of your cluster. A pop-up displays.

- Select the latest patch or next minor version to upgrade to. Tick the "Upgrade cluster node pools as well" checkbox if you want to upgrade the node pools in your cluster to the same Kubernetes version.

- Click Upgrade.

Upgrading a Kapsule cluster to the next minor version using the CLI

Before you start

To complete the actions presented below, you must have:

- A working CLI with your credentials set up

This procedure will upgrade your Kubernetes Kapsule cluster to the latest k8s version available on the Kapsule API.

First, it is essential to verify that the most recent version of Kapsule adequately supports your workload. We maintain a compatibility matrix for various components, as your current cluster might use components that are deprecated or unavailable in the latest version.

For further details, consult our version policy.

We recommend you read the Kubernetes changelog to stay informed on the latest version upgrades.
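Before upgrading, it can help to confirm which version the cluster currently runs and which versions it can be upgraded to. The following is a minimal sketch, assuming the `scw` CLI is configured and that you pass your own cluster ID. The `run` and `precheck` function names and the `DRY_RUN` switch are illustrative helpers, not part of the CLI. By default the commands are only printed; set `DRY_RUN=0` to execute them.

```shell
#!/bin/sh
# Illustrative helper: print the command when DRY_RUN=1 (the default),
# execute it otherwise.
run() {
  if [ "${DRY_RUN:-1}" = "1" ]; then
    echo "$*"
  else
    "$@"
  fi
}

# Show the cluster's current details (including its Kubernetes version),
# then list the versions this cluster can be upgraded to.
precheck() {
  cluster_id="$1"
  run scw k8s cluster get "$cluster_id"
  run scw k8s cluster list-available-versions "$cluster_id"
}
```

For example, `precheck "$CLUSTER_ID"` prints the two commands for review, and `DRY_RUN=0 precheck "$CLUSTER_ID"` runs them.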

Effective upgrade

From here, two options are available: you are upgrading by either one minor version or several.

One minor version

This option is the most straightforward and requires you to first upgrade your control plane.

scw k8s cluster upgrade $CLUSTER_ID version=$NEW_K8S_VERSION

Additionally, you can upgrade one pool independently by running the following command:

scw k8s pool upgrade $POOL_ID version=$NEW_K8S_VERSION

If you wish to migrate your workload manually, you can do so by following the steps described in the runtimes section.

scw k8s pool create zone=$OLD_POOL_ZONE size=$SIZE_OF_YOUR_OLD_POOL version=$NEW_CLUSTER_VERSION cluster-id=$CLUSTER_ID

Multiple minor versions

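The process is the same as for a single minor version, except that you must repeat the steps for each minor version in turn: upgrade the control plane, then the pools, before moving on to the next minor version.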
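The repetition can be planned ahead of time. The sketch below is an illustration only: `minor_steps` and `print_upgrade_plan` are hypothetical helper names, and the script prints commands rather than running them. It assumes both versions share the same major number. The `<patch>` and `<pool-id>` placeholders are left unfilled on purpose, since the exact patch version must be chosen from the versions the API offers and the pool IDs are specific to your cluster. The `scw k8s cluster wait` line is included on the assumption that you want each control plane upgrade to settle before upgrading pools.

```shell
#!/bin/sh
# Print every minor series strictly after $1, up to and including $2.
# Accepts "1.26" or "1.26.3" style input; assumes the same major version.
minor_steps() {
  major="${1%%.*}"
  from="${1#*.}"; from="${from%%.*}"
  to="${2#*.}"; to="${to%%.*}"
  steps=""
  i=$((from + 1))
  while [ "$i" -le "$to" ]; do
    steps="${steps:+$steps }$major.$i"
    i=$((i + 1))
  done
  echo "$steps"
}

# Print (do not run) the commands for each intermediate minor version:
# control plane first, then pools, as described above.
print_upgrade_plan() {
  cluster_id="$1"
  for minor in $(minor_steps "$2" "$3"); do
    echo "scw k8s cluster upgrade $cluster_id version=$minor.<patch>"
    echo "scw k8s cluster wait $cluster_id"
    echo "scw k8s pool upgrade <pool-id> version=$minor.<patch>"
  done
}
```

Calling `print_upgrade_plan "$CLUSTER_ID" 1.26 1.29` lists one group of commands per minor version, which you can review and complete with real patch versions and pool IDs before running anything.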